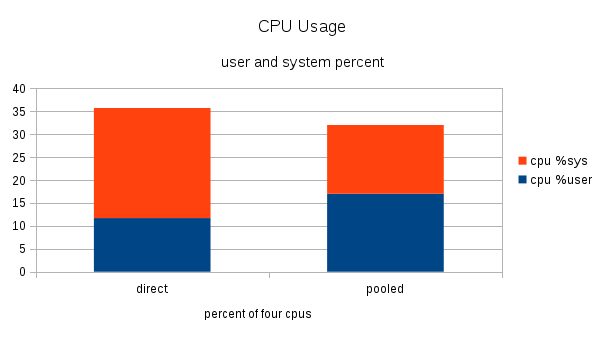

The point of using asynchronous launches from the CPU is to allow the CPU to send commands to the GPU and then perform other tasks while the GPU executes the commands.įigure 1. The long gap between the 10th API call and the 10th launch would be a high latency, but an intentional one. But the 10th kernel in the sequence might have a large launch latency by this definition, because the CPU enqueued the 10 launch commands and returned quickly, while the GPU has to complete the first nine kernels before it can start the 10th.

You’d expect minimal latency from the first API call to the first kernel execution. Imagine that you make 10 short API calls to launch a dependent sequence of 10 large kernels. Latency is not always bad in asynchronous systems. In this post, we mostly talk about launch latency. Task latency, or total time, is the time between adding a task to the queue and the task finishing. This definition includes the time of the launch API call. There are about 20 µs of launch latency in Figure 1 between the beginning of the launch call (in the CUDA API row) and the beginning of the kernel execution (in the CUDA Tesla V100-SXM row). For example, CUDA kernel launch latency could be defined as the time range from the beginning of the launch API call to the beginning of the kernel execution. Launch latency, sometimes called induction time, is the time between requesting an asynchronous task and beginning to execute it. There are two common definitions of latency.

First, here are some definitions of latency and overhead regarding CUDA.
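To make the 10-kernel thought experiment concrete, here is a small timing model (a sketch, not a measurement). It assumes one in-order stream, launch calls issued back to back on the CPU, and dependent kernels that run one after another. The names `LaunchTiming` and `simulate_dependent_launches`, and the parameters `api_call_us`, `kernel_us`, and `pickup_us` (a hypothetical fixed delay between a launch call returning and the GPU being able to start that kernel), are illustrative assumptions. The example values are chosen only so that the first kernel shows roughly the 20 µs of launch latency seen in Figure 1.

```python
from dataclasses import dataclass


@dataclass
class LaunchTiming:
    api_begin: float     # µs: CPU begins the launch API call
    kernel_begin: float  # µs: GPU begins executing the kernel
    kernel_end: float    # µs: GPU finishes the kernel

    @property
    def launch_latency(self) -> float:
        # Launch latency: start of the launch call -> start of kernel execution
        return self.kernel_begin - self.api_begin

    @property
    def task_latency(self) -> float:
        # Task latency (total time): task enqueued -> task finished
        return self.kernel_end - self.api_begin


def simulate_dependent_launches(n, api_call_us, kernel_us, pickup_us):
    """Model n dependent kernels launched asynchronously on one in-order stream.

    Hypothetical assumptions, for illustration only:
    - each launch call takes api_call_us on the CPU, issued back to back;
    - the GPU can start a kernel no earlier than pickup_us after its call returns;
    - kernel i cannot start before kernel i-1 has finished (dependency).
    """
    timings = []
    gpu_free_at = 0.0
    for i in range(n):
        api_begin = i * api_call_us
        api_end = api_begin + api_call_us
        # The kernel waits for both its own launch and the previous kernel.
        kernel_begin = max(api_end + pickup_us, gpu_free_at)
        kernel_end = kernel_begin + kernel_us
        gpu_free_at = kernel_end
        timings.append(LaunchTiming(api_begin, kernel_begin, kernel_end))
    return timings


if __name__ == "__main__":
    runs = simulate_dependent_launches(n=10, api_call_us=5.0,
                                       kernel_us=1000.0, pickup_us=15.0)
    for i, t in enumerate(runs, start=1):
        print(f"kernel {i:2d}: launch latency {t.launch_latency:7.1f} us, "
              f"task latency {t.task_latency:7.1f} us")
```

In this model the CPU finishes issuing all 10 calls after 50 µs. The first kernel sees 20 µs of launch latency, while the 10th sees 8975 µs. Almost all of that comes from waiting for the nine earlier kernels, not from launch overhead. This is the intentional kind of latency described above.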